The challenge was making the installation feel seamless while capturing biometric data to generate a personalized piece for each visitor.

We worked back and forth with the client to find a sculpture aesthetic that carried the house's core values of sensation, well-being, and fluidity. We applied generative and procedural techniques to keep the visual language consistent while still making every piece unique.

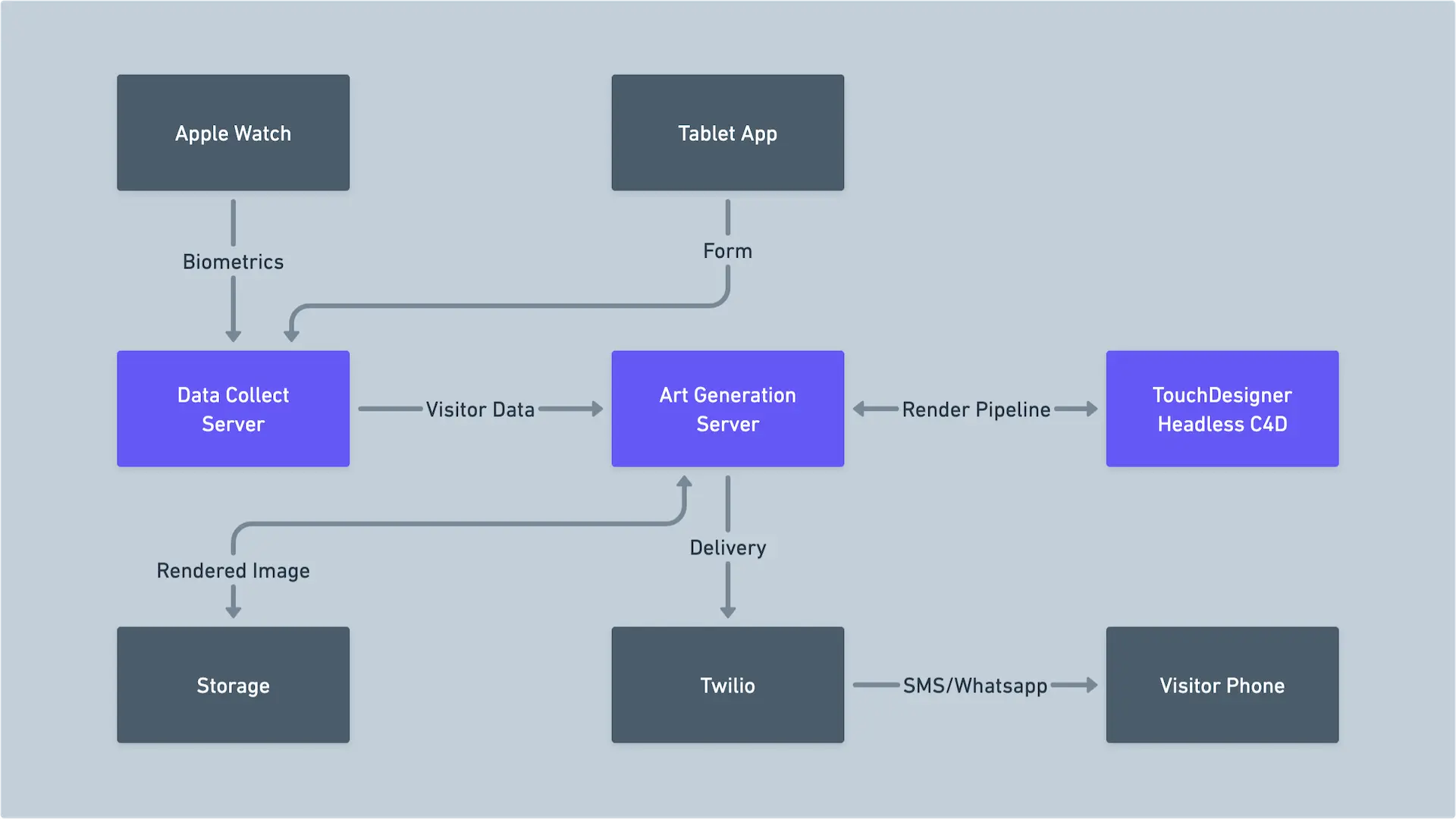

I helped define the project from the pitch stage and architected the system behind it, connecting capture, processing, rendering, and delivery into one pipeline.

Visitors wore an Apple Watch during a guided tour while the system recorded heart rate and 6-axis motion data in real time. That data was later matched with the visitor's info from a tablet web app, so each person's path through the space could generate its own output.
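The matching step can be illustrated with a minimal sketch. It assumes each recording carries a watch identifier and a start time, and that the tablet registration stores which watch was handed out and when. The names `WatchSession`, `Registration`, `match_registration`, and the 15-minute window are hypothetical, chosen for illustration rather than taken from the production system.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class WatchSession:
    watch_id: str      # hypothetical identifier for the physical watch
    started_at: float  # Unix seconds when recording began


@dataclass
class Registration:
    visitor_name: str
    phone: str
    watch_id: str         # watch handed to the visitor at the tablet
    registered_at: float  # Unix seconds when the form was submitted


def match_registration(
    session: WatchSession,
    registrations: List[Registration],
    max_gap_s: float = 900.0,  # assumed tolerance between sign-up and tour start
) -> Optional[Registration]:
    """Return the registration for the same watch that is closest in time."""
    candidates = [
        r for r in registrations
        if r.watch_id == session.watch_id
        and abs(r.registered_at - session.started_at) <= max_gap_s
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda r: abs(r.registered_at - session.started_at))
```

Filtering by watch first and then picking the nearest timestamp lets the same watch be reused across tours without attaching one visitor's data to another's record.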

The system was built across watchOS, Vue.js, Node-RED, TouchDesigner, and Cinema 4D.

We engineered a distributed queue between the venue devices and the render machine, using Node-RED as the central orchestrator. TouchDesigner normalized the incoming data and generated the procedural geometry before Cinema 4D rendered the final image.
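To make the queueing idea concrete, here is a simplified in-memory sketch of a render queue with retries. It is not the Node-RED flow itself: `RenderJob`, `RenderQueue`, the status names, and the retry limit are all assumptions made for illustration, and a real deployment would persist jobs and move them between machines over the network.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, Dict, Optional


@dataclass
class RenderJob:
    job_id: str
    payload: dict            # e.g. normalized biometric values for one visitor
    attempts: int = 0
    status: str = "queued"   # queued -> rendering -> done | failed


class RenderQueue:
    """FIFO queue: venue devices enqueue, the render machine claims jobs."""

    def __init__(self, max_attempts: int = 3) -> None:
        self.max_attempts = max_attempts
        self.jobs: Dict[str, RenderJob] = {}
        self._pending: Deque[RenderJob] = deque()

    def enqueue(self, job_id: str, payload: dict) -> RenderJob:
        job = RenderJob(job_id, payload)
        self.jobs[job_id] = job
        self._pending.append(job)
        return job

    def claim(self) -> Optional[RenderJob]:
        """Hand the oldest pending job to the renderer, or None if idle."""
        if not self._pending:
            return None
        job = self._pending.popleft()
        job.status = "rendering"
        job.attempts += 1
        return job

    def complete(self, job_id: str) -> None:
        self.jobs[job_id].status = "done"

    def fail(self, job_id: str) -> None:
        """Requeue at the back until the retry budget is spent."""
        job = self.jobs[job_id]
        if job.attempts < self.max_attempts:
            job.status = "queued"
            self._pending.append(job)
        else:
            job.status = "failed"
```

Requeuing a failed job at the back keeps one problematic render from blocking the visitors waiting behind it.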

A few minutes later, the artwork was sent back to the visitor via Twilio SMS/WhatsApp. The result was a fully automated capture-to-phone pipeline that turned each tour into a premium personalized digital souvenir.
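As a rough sketch of the delivery step, the function below builds the form parameters for Twilio's Messages REST endpoint without sending anything. The helper name `build_message_request`, the message text, and the choice to attach the image on WhatsApp but send a link over SMS are assumptions for illustration; the production flow may have differed.

```python
from typing import Dict, Tuple

TWILIO_API = "https://api.twilio.com/2010-04-01"


def build_message_request(
    account_sid: str,
    sender: str,
    recipient: str,
    image_url: str,
    channel: str = "sms",
) -> Tuple[str, Dict[str, str]]:
    """Return the endpoint URL and form fields for one outgoing message."""
    if channel == "whatsapp":
        # WhatsApp addresses use the "whatsapp:" prefix on both ends.
        params = {
            "From": f"whatsapp:{sender}",
            "To": f"whatsapp:{recipient}",
            "Body": "Your personalized artwork is ready.",
            "MediaUrl": image_url,
        }
    elif channel == "sms":
        # Assumption: plain SMS carries a link rather than an attachment.
        params = {
            "From": sender,
            "To": recipient,
            "Body": f"Your personalized artwork is ready: {image_url}",
        }
    else:
        raise ValueError(f"unsupported channel: {channel}")
    url = f"{TWILIO_API}/Accounts/{account_sid}/Messages.json"
    return url, params
```

Sending the message would then be an HTTP POST of these fields to the returned URL, authenticated with the account SID and auth token.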